Screen time is families’ biggest tension. Here are 5 reforms they need

In the What the Future issues on Teens and Parenting, the biggest tensions in the household were around screen time and online behavior. Having tech so prevalent in kids’ lives is uncharted territory, and safety is a concern. The Family Online Safety Institute (FOSI) is a nonprofit funded by member organizations that include many of the largest tech and gaming companies. Its state policy lead, Marissa Edmund, explains how informed policies and education can empower families to manage digital risks and benefits while ensuring privacy, safety and balanced screen time.

Matt Carmichael: From previous What the Future issues (and my own house!) we know there are lots of challenges with technology to address as a family. Can policy help?

Marissa Edmund: Definitely. Policymakers are hearing from their constituents that this is an issue. On all levels, everyone wants to grapple with the topic of kids’ screen time and safety, but policymakers need to understand how technology works. They're working on hundreds of issues, and they don't always have that baseline education on how technology works. There’s a difference between a social media platform, a gaming system or a website.

Carmichael: I suspect education is important for the family, too.

Edmund: First is ensuring that parents know the types of platforms that their kids are using. At FOSI, we have a “Platforms Explained” series that says in very layman's terms what each of these platforms does. Then, instead of just a complete rejection of the platform, parents can understand what it is. Next is having media and digital literacy programs within schools so children are learning what is safe and appropriate behavior online and how to identify what's misinformation and disinformation.

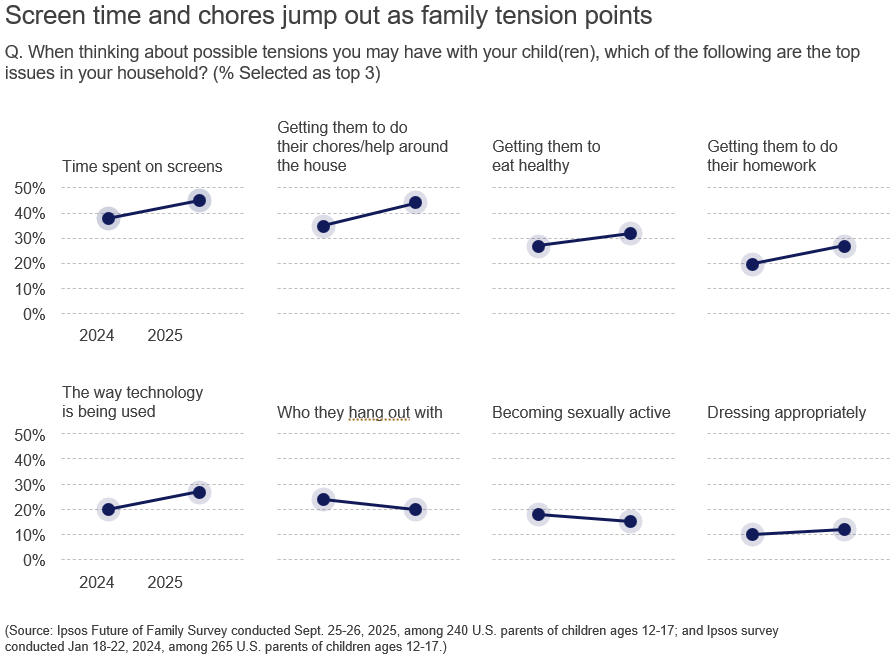

[CHART: Screen time and chores jump out as family tension points]

Carmichael: As a parent, it’s hard to keep up and I don’t often feel like I have the tools to do so.

Edmund: Something that's super helpful is ensuring that users can easily access those tools that help create the experiences they want to have online. Your kid might say they are interested in a gaming platform and there's a chat feature. Can parents turn this chat feature off? Being able to access some of those user controls is very important so it gives agency to the user, and they're able to have the experience online that works for them.

Carmichael: What would be the most helpful policy shift?

Edmund: We would like to see a federal data privacy law so we can start implementing online safety measures that could help protect kids online. Many states have already implemented their own privacy laws. But a baseline federal data privacy law would be helpful so users can trust platforms and feel comfortable that their data is being protected while they engage with them.

Carmichael: There are three legs to all of this: time spent on screens, privacy and actual safety. How do you balance those priorities?

Edmund: We want to protect kids on the internet, not from the internet. We want to shield our kids from the worst of online experiences. Beginning in that middle school, high school age, the training wheels come off metaphorically in the digital space.

We would love to see platforms advertise their parental and user controls so we're able to block unwanted contact and folks that we don't want, or block certain features rather than entire platforms. As children age, they should have a trusted adult to speak with if something happens online and be able to use those controls for themselves.

Carmichael: AI is driving a lot of fear in privacy and safety. What kind of policies do you think we need as these technologies proliferate?

Edmund: We hope any legislation that comes out is rooted in research and not fear-based. Collaboration among all of those involved, including parents and families, will help to ensure that industry is being held accountable and is being responsible about the technologies they’re building. It will also help ensure that policymakers are responsive to what parents and families want.

I believe that smaller, more narrowly focused pieces of legislation are going to be most effective.

For further reading

- Parental controls for online safety are underutilized, new study finds

- How technology is reshaping family dynamics and parenting in the future

- What the Future: Teen

Download the full What The Future: Family issue