What it will take to help people trust AI for democracy

One question that gets asked a lot is “Can’t we use AI to fight disinformation rather than just create it?” And, of course, the answer is yes. Ginny Badanes leads a program at Microsoft called Democracy Forward. It works to use technology to protect democratic institutions like elections and campaigns, promote civic engagement and defend against disinformation. Like Cisco’s Annie Hardy, Badanes thinks a lack of trust in institutions is a key issue plaguing us today, one that could get worse in the future.

Matt Carmichael: Deepfake tech is impacting not just elections but all kinds of industries. Are we entering an online security arms race?

Ginny Badanes: Industry researchers who were working on this really found that you can’t ever get to a point of reliability with deepfake detection because the technology is getting so much better so quickly. We should be moving toward more labeling, which is a broad term. On one hand we’re talking about labeling of authentic material, and then on the other we’re talking about labeling of synthetically created material.

Carmichael: Why are people so willing to believe disinformation in the first place?

Badanes: Everything starts from the loss of trust. We’re already in an environment where even without AI-generated imagery or content, people have lost their trust in what they can see. We've really been focused on how can you give people more information about the publisher or the site that they're reading that allows them to make their own determinations and decisions about what they're reading or consuming. In giving more information to readers, in theory, they should be able to develop trust on their own terms and not feel like they're being told what to trust and not trust.

Carmichael: But then there’s the behavioral science problem of how we trust the things that we believe to be true anyway.

Badanes: That’s why this disinformation cycle is just so strong because people are inclined to believe something that they already believe. That’s really where we need to hold the publishers of this information to account. There’s nothing wrong with opinion journalism, but let’s just be clear that it’s an opinion versus a fact.

Carmichael: How do we balance the responsibility between creators and believers of disinformation?

Badanes: It’s a shared responsibility. I’ve been thinking through this disinformation challenge in terms of the intervention points to get us to a place where there will still be murky information in the environment, but it’s manageable. If we look back at spam, there was a point where it proliferated. People were sending money to “Nigerian princes.” It was overwhelming inboxes. Now spam still exists but in a manageable way.

Carmichael: How did we get there?

Badanes: There was regulation put in place that said these certain behaviors are not acceptable. There were tech solutions, too. The tech companies figured out a way to decipher between the different kinds of emails that were coming in and probably used AI to determine the likelihood that something was authentic or not. But there’s a consumer responsibility too. I have a responsibility as a receiver of emails to think twice and use some thought before opening an email or an attachment. I didn’t do that on my own. I was taught that.

Carmichael: Where does the responsibility lie in terms of solutions?

Badanes: I think it’s a similar practice when it comes to disinformation defense and getting us to a place where it’s manageable. There are government responsibilities, technology company responsibilities, civil society responsibilities and responsibilities on employers training their employees. Then there is the consumer side where we should be more critical consumers of information.

Carmichael: How do we balance people’s wonder and worry when it comes to AI?

Badanes: I’ve been reflecting lately on the difference between this moment and when social media first came on the scene. We were looking at how great social media was going to be, particularly in our space of democracy. I think we over-indexed on the wonder and didn’t spend enough time on the worry. But I think people are saying, “I’m not going to make that same mistake again, so I’m going to over-index on the worry without really spending the time considering the wonder.” We need to learn not to worry more, but to find that balance. Let’s also consider what are not just the short-term concerns we have — of which I recognize there are many — but what are these longer-term concerns? It’s not too late for us to be able to figure them out and put guardrails in place.

For further reading

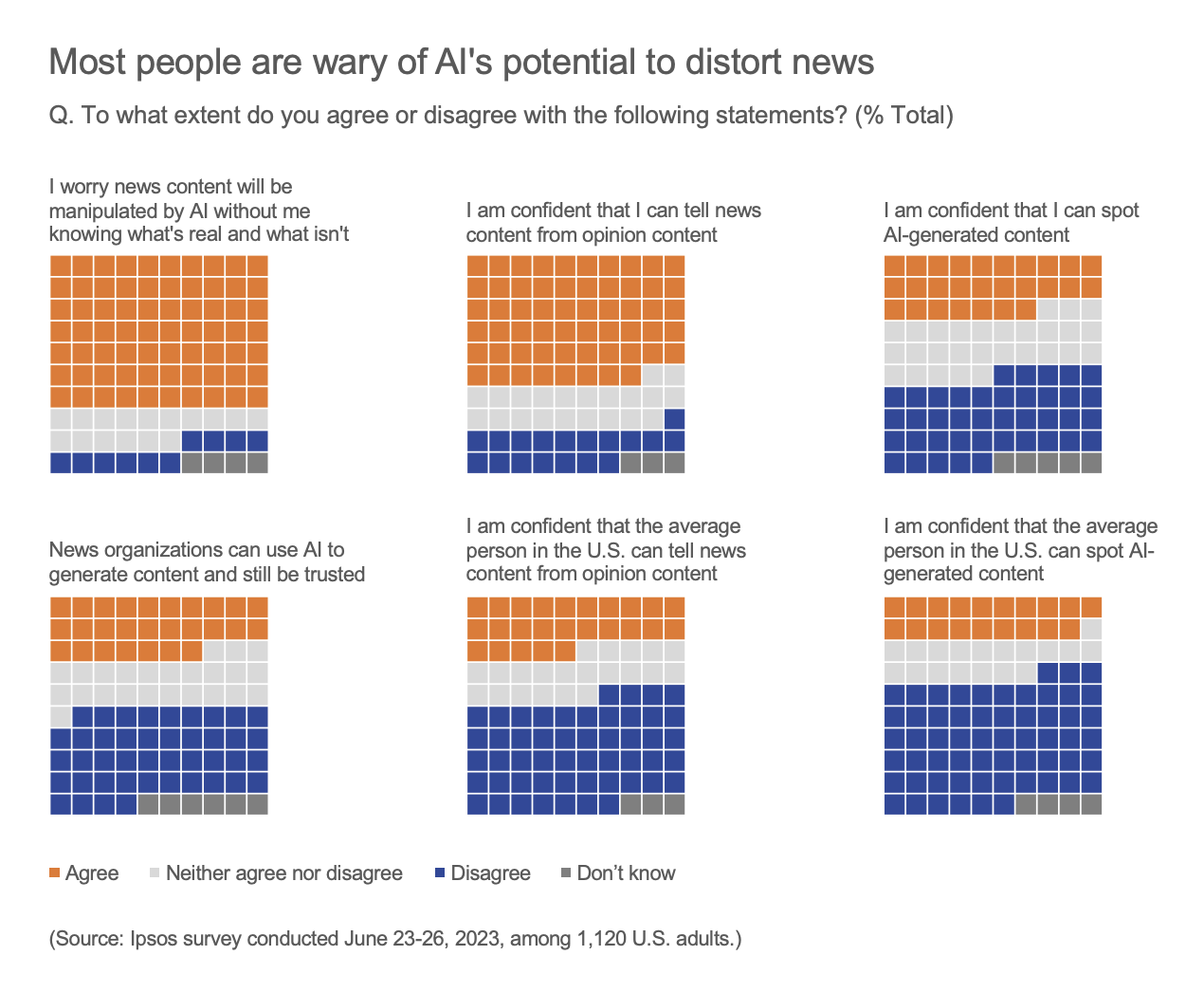

Americans hold mixed opinions on AI and fear its potential to disrupt society, drive misinformation