How we can build needed trust in AI through equity

As a futurist for Cisco Systems, Senior Visioneer Annie Hardy’s work is focused on the future of trust, the internet, expertise and work. When she thinks about artificial intelligence, she sees trust as a core issue. How do we build trust with each other? How do we build trust with customers? How do we build trust in a world where we can’t believe what we see or hear? The answer is tricky, and the stakes couldn’t be higher.

Matt Carmichael: When you think about the future, how is it different for a business-to-business (B2B) versus a business-to-consumer (B2C) company?

Annie Hardy: When I think of a B2B company versus a B2C company, the first thing I think of is the future of trust because of the way the consumer relationships and loyalty have shifted over many years. For instance, if we look back to 2008, 2009, when blockchain was created, that was a reaction to the problem of trust, that financial institutions were no longer trusted institutions.

Carmichael: How else does trust affect the market?

Hardy: Companies are now stepping in with their political values across both ends of the spectrum. You have companies that are very progressive and companies that stand for conservative ideals. With B2C products, it can be very personal. With B2B, it’s more institutional. Risk has a lot to do with the trust imperative for a B2B company versus a B2C company.

Generation Z considers the ESG [environmental, social, governance] values of a company as they’re considering a job and will decline a job offer if they don’t believe that the values and the mission of the company align with theirs. Business-to-human values are really going to be a critical component in whether somebody trusts a company.

Carmichael: There are cloud-based vs. device-based AI solutions. How does that affect privacy and security?

Hardy: The question of the regulations around any kind of technology depends on where you live. A great example would be GDPR in the EU or regulations like HIPAA in the U.S. protecting health information. What we see in the U.S. is highly regulated industries being regulated because the risk is high versus in the European Union [where] you have governments protecting people. Regulation for things like cloud-based computing or AI is going to align with the values of the culture.

Carmichael: What are the ethical risks of AI?

Hardy: When we look at companies doing responsible innovation and responsible AI, this is an ethos. It goes back to trust. Then you have, “We’re going to move fast, we’re going to lead the way, we’re going to bring innovation to people.” That’s a different ethos. That’s the way technology has always been.

Carmichael: That sounds like two very different futures.

Hardy: Yes, it’s contingent on the adoption of a specific ethos. If we adopt responsible AI, then we’re going to start talking about UBI [universal basic income]. If we adopt innovation, what we’re going to see is the rise of agrarian societies because people can’t afford to live in cities. These are alternative futures contingent on what ethos is established and what trust looks like as a result.

Carmichael: What if we don’t build trust?

Hardy: What is the “prepper” equivalent of an anti-AI society? My concern is that we’re not going to be able to upskill in time. Everybody says AI’s going to take jobs away. In every other industrial revolution, more jobs were created than were lost. Part of this is fear out of a lack of understanding, which is very natural given how complex AI is.

Carmichael: What if people’s fear grows, or jobs disappear, and we don’t get UBI?

Hardy: We’ll see the growth of personal agriculture and societies that are based on agriculture rather than technology. People are going to go off-grid and become more self-sufficient. The cost of living in cities is pushing people out. Urban decentralization is already happening. All of that is a trust thing. People are just going to want to extract themselves from the system.

Carmichael: One way to reduce fear and build trust is to have more voices in the rooms where AIs are created. We know that. How do we do that?

Hardy: There are a lot of different things that are going to have to play into solving the bias and data problem. There are technology solutions, social, relational, corporate solutions and enterprise approaches that we can take. If we’re going to fill the pipeline with voices that are distinctive, we have to figure out what it looks like to fairly hire and train and what diversity actually looks like. The beauty is that with the rise of the low-code, no-code movement we can get people who aren’t necessarily experts at math to be a part of the data science or the artificial intelligence pipeline.

For further reading

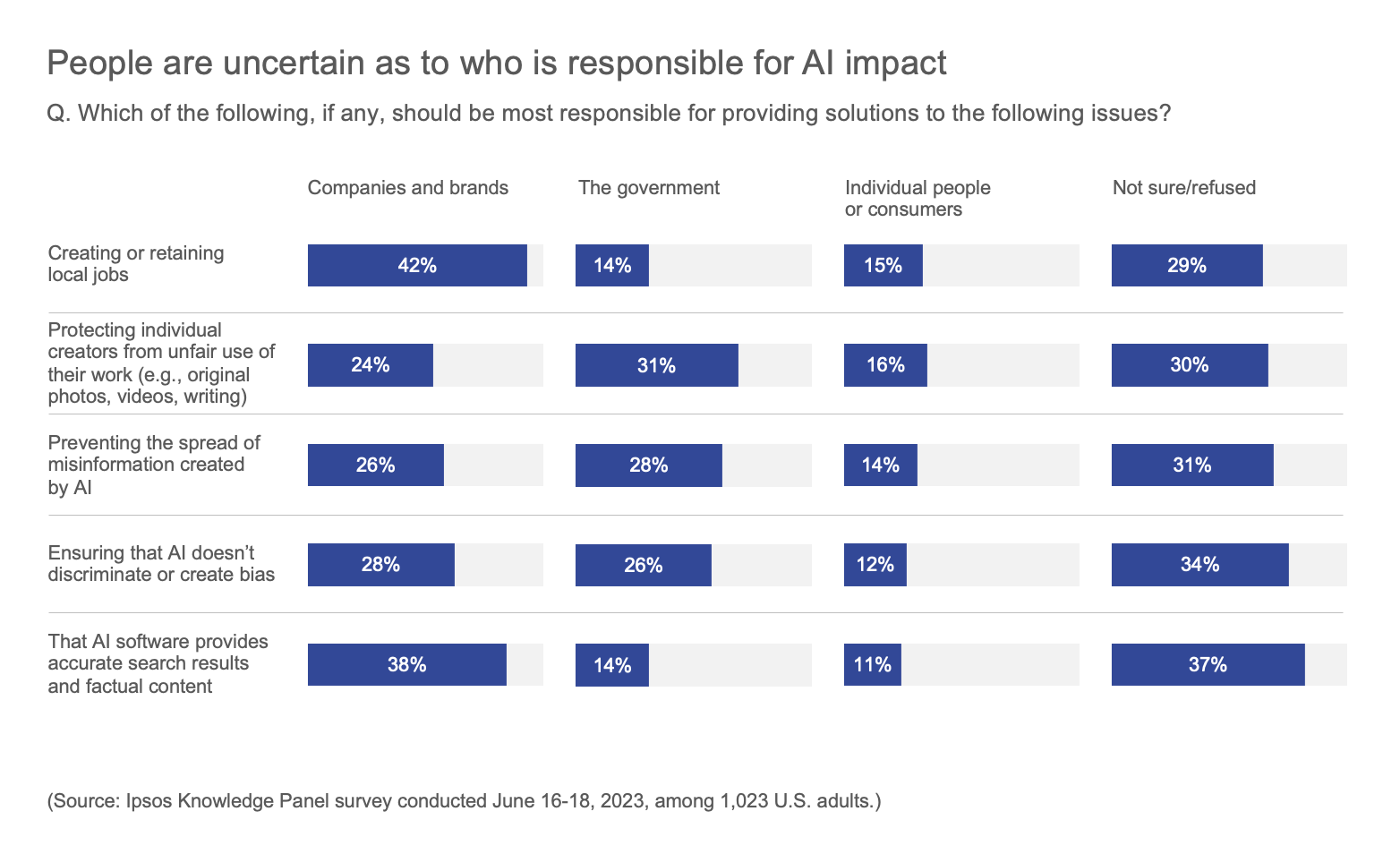

Few Americans trust the companies developing AI systems to do so responsibly