Why responsible AI will unblock our worries

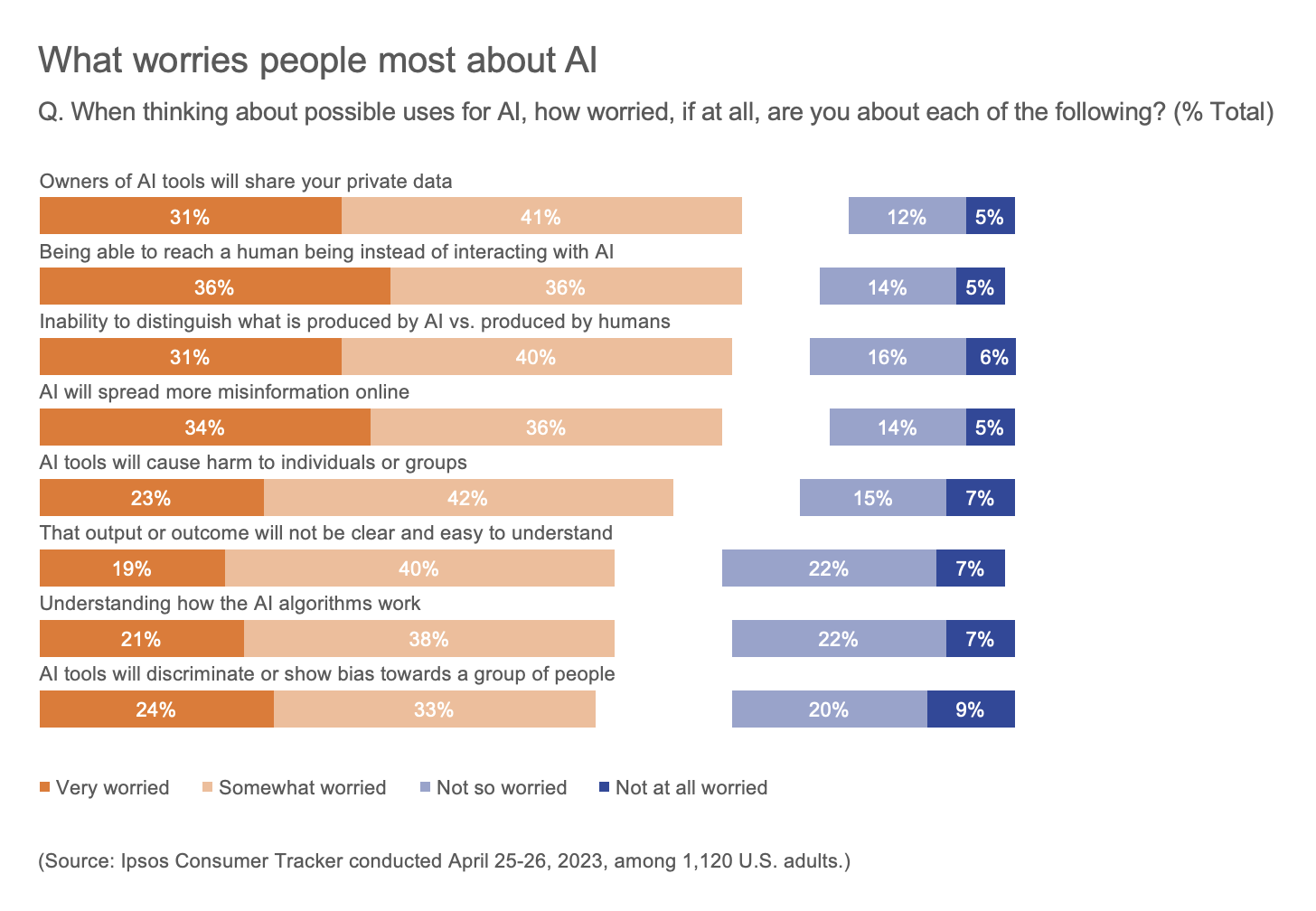

For those deploying artificial intelligence tools, it’s important to keep humans in mind. Ipsos developed the FAST framework (fair, accountable, secure and transparent) to guide ethical development. It’s based on Ipsos research, which shows that people want AI tools to be developed without bias; for developers to be responsible for their work; for data and privacy to be protected; and for it to be clear when and how AI is being used.

Product testing will be essential. A lack of safeguards against some of the most significant sources of AI risk can pose an existential risk to organizations, says Lorenzo Larini, CEO of Ipsos North America.

“The FAST framework builds in precautions that will help people feel safe in using AI without sacrificing speed.”

Public perception is shifting quickly. Now is the time to act and measure, full stop.

For further reading

Most Americans want tech companies to commit to AI safeguards

Ipsos Top Topics: Artificial Intelligence

AI threatens humanity’s future, 61% of Americans say: Reuters/Ipsos poll