Why ethics should be at the center of new AI tech

It’s hard to tie Taryn Southern to one job, or even one career. She’s a former “American Idol” semi-finalist, a YouTube star and creator of the first pop album composed with artificial intelligence. She’s now helping explain one of the most complicated technologies in the world, brain-computer interfaces developed by Blackrock Neurotech. These experimental tools are helping people who have neurodegenerative conditions regain some lost functions. She has a “front seat to the future,” she says. Here’s what she sees from that view.

Matt Carmichael: Where do you see brain-computer interfaces (BCI) going in the future?

Taryn Southern: Our hope is that people with paralysis or other neurological disorders can restore function through these devices. And that it’s as easy as going to their doctor and saying, “I want to have this surgery,” and it is reimbursed by insurance. They can take the device home and use it for eating or cooking or making art with the interface. I hope it’s a normal, commonplace thing for people to be treating these diseases through electrical means with BCI versus pharmaceutical means.

Carmichael: There are a lot of scary narratives in pop culture about BCI being used to control people. How do you combat that as a storyteller?

Southern: First, there’s a misunderstanding around how the technology works and how it interfaces with the brain. So, one area of my storytelling is clearing that up. We’re not reading people’s thoughts. That’s just not possible, at least in the current form of BCI. We are listening to a cluster of neurons out of 80 billion neurons, and we’re running that through machine learning algorithms to study one very specific thing: movement intention. The other part is highlighting the very human-centric ways that this technology is improving lives and showing the real people that are using this technology.

Carmichael: At some point will we see this technology used more generally to augment skill sets rather than treat disorders?

Southern: Theoretically, the technology has demonstrated feats that I think make it very plausible that humans could one day use these devices to augment their abilities. Augmentation is in the eye of the beholder. In Hollywood storytelling, people think about limitless productivity or enhanced memory. But one of the more interesting use cases of our technology now is the ability to provide sensation to people who’ve lost their sense of touch. It’s sort of an odd thing to consider in terms of augmentation, but we might end up with additional senses that we didn’t have before.

Carmichael: How do ethics fit into the AI discussion?

Southern: I’m not an ethicist, nor am I a philosopher. I was working in generative AI in 2016, and I saw how cool the possibilities of these technologies were. But I never guessed in a million years the capabilities that it would have in 2023. We’re forced to have ethics conversations really quickly. It’s critical that those are not just conversations, but that they’re built into the ethos of the technology.

Carmichael: So how do ethics fit into the neuroscience?

Southern: It’s the exact same thing. We just haven’t hit anything close to a critical mass. We’re talking about several dozen patients who are in very isolated, very controlled research studies. Ethics is the No. 1 component of constructing these studies. These are not yet available to the public. We’re at an advantage right now because we can have these conversations before anything has been unleashed. We’ve had the learnings of several dozen patients in many different institutions to say, “What are the things that we’ve learned over 20 years of research?”

Carmichael: What are some of the things your ethics board considers and discusses?

Southern: It’s imperative that ethics are the foundational layer on top of which everything else is built. You have to learn how to ask the right ethics questions. There are certain ethical questions like privacy and security that are obvious. Then there are others that don’t become apparent until you’re in human trials and you’re in their home and you’re getting that feedback. In some ways, because BCI has been slow in comparison to other industries like AI, we’ve had time to really approach it in a responsible manner. These are not questions that are going to work themselves out overnight. A lot of it also depends on how society integrates with the technology.

Carmichael: What can the AI community learn from neuroscience?

Southern: The lesson for generative AI is there are ways we can be testing these technologies with very small, limited populations to ensure that the engineers are asking the right questions in all the different areas that touch human lives.

Carmichael: On a scale from one to 10, with 10 being super freaked out and one being really hopeful about everything, where are you falling with AI today?

Southern: Can I exist in a very paradoxical view of being equally terrified and excited? I think I’m a nine on both sides.

|

← Read previous |

Read next → |

For further reading

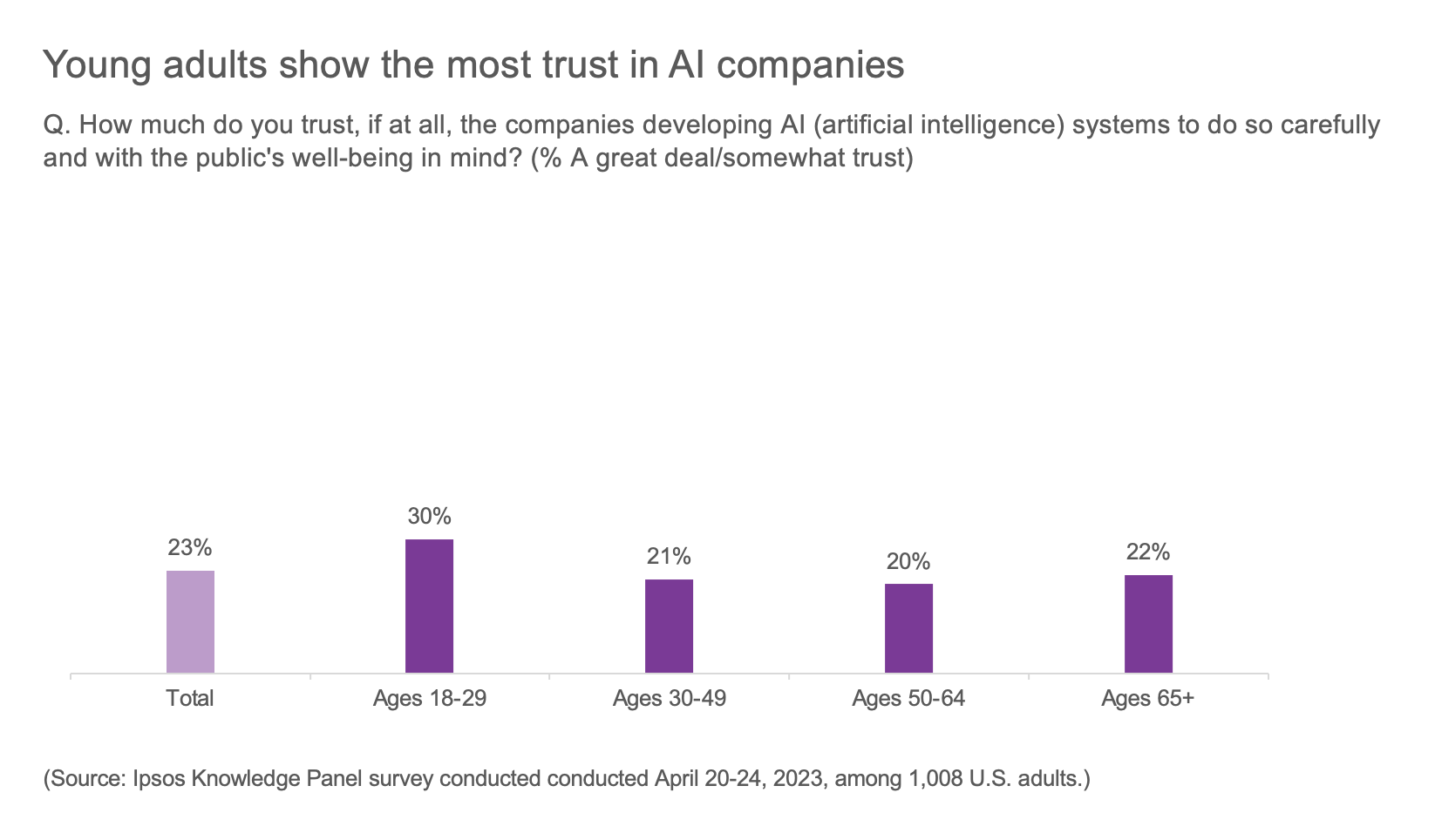

How do Americans feel about Generative AI? It’s complicated.

Most Americans want tech companies to commit to AI safeguards